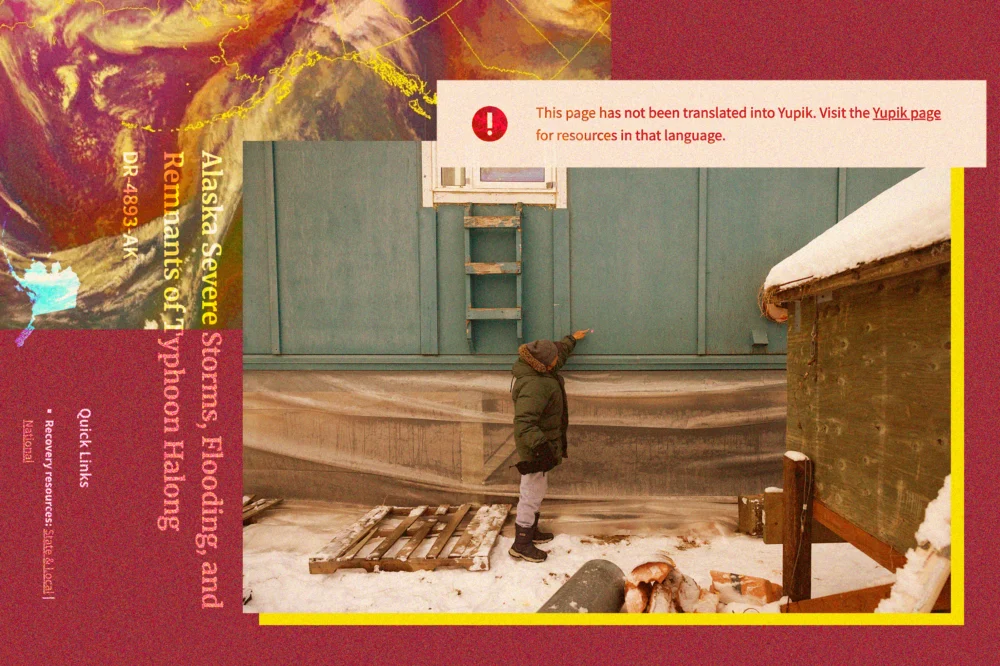

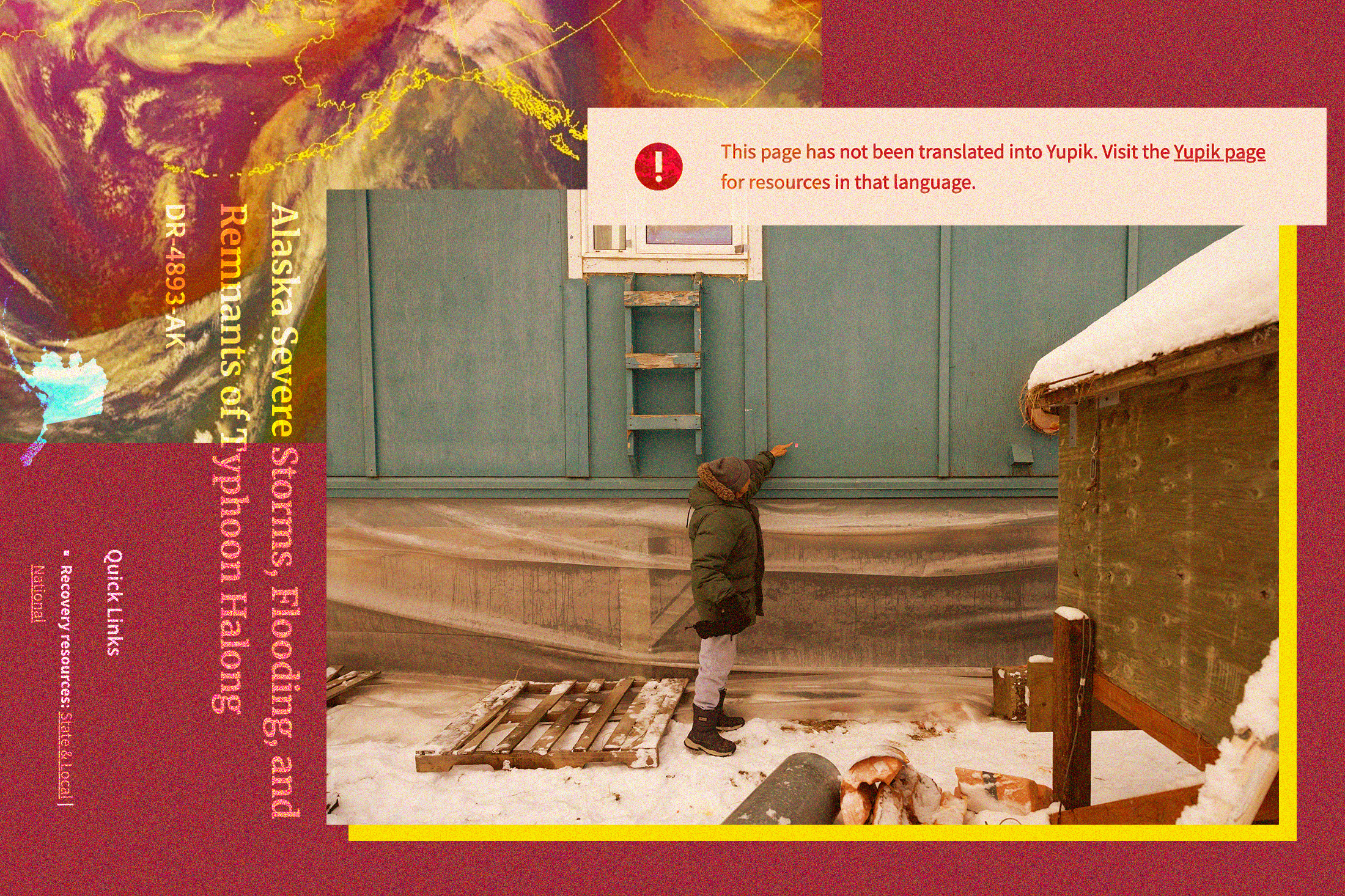

After devastating storms swept through remote villages across Western Alaska in 2022, the Federal Emergency Management Agency (FEMA) faced a critical challenge: ensuring accessible disaster aid for communities where English is not the primary language. To bridge this communication gap, FEMA contracted a California-based company to translate applications for financial assistance. This was a crucial task in the Yukon-Kuskokwim Delta, a sprawling constellation of small Alaska Native communities, where nearly half the population – approximately 10,000 people – learn Yugtun, the Central Yup’ik dialect, before acquiring English proficiency. Farther north, an additional 3,000 individuals speak Iñupiaq, underscoring the profound linguistic diversity of the region.

However, the initial attempt at translation proved to be a significant failure. Journalists at KYUK, the local public radio station, discovered that the translated materials distributed to residents were largely unintelligible. Julia Jimmie, a Yup’ik speaker and translator at KYUK, described the content as "Yup’ik words all right, but they were all jumbled together, and they didn’t make sense." This fundamental breakdown in communication led Jimmie to reflect on a deeper concern: "It made me think that someone somewhere thought that nobody spoke or understood our language anymore." This incident highlighted a pervasive issue of cultural and linguistic insensitivity in federal disaster response, drawing national attention and prompting a civil rights investigation into FEMA’s practices.

Three years later, the specter of natural disaster has returned to Alaska with Typhoon Halong, whose destructive remnants in mid-October displaced over 1,500 residents and claimed at least one life in the village of Kwigillingok. As communities once again grapple with the aftermath of a catastrophic storm, the critical need for accurate and culturally appropriate communication has resurfaced, but this time, with a new and complex dimension: the role of artificial intelligence (AI) in translating Indigenous languages.

Just days before the Trump administration approved a disaster declaration for Typhoon Halong, on October 21, Prisma International, a Minneapolis-based company, posted an advertisement seeking "experienced, professional Translators and Interpreters" for Yup’ik, Iñupiaq, and other Alaska Native languages. Government records indicate that Prisma has secured over 30 contracts with FEMA in recent years, establishing itself as a significant vendor for the agency. Prisma’s corporate website explicitly states that its tools "combine AI and human expertise to accelerate translation, simplify language access, and enhance communication across audiences, systems, and users." Further raising questions, the job listing for Alaska Native language translators specified that applicants would be required to "provide written translations using a Computer-Assisted Translation (CAT) tool," a term often synonymous with software that incorporates machine translation.

While a spokesperson for FEMA declined to confirm in late October whether the agency intended to contract with Prisma in Alaska for the current disaster response, the job posting itself strongly suggested such a partnership. It listed a preference for applicants with experience translating or interpreting "for emergency management agencies, e.g. FEMA," alongside knowledge of the recent storm and a connection to local Indigenous communities. Moreover, multiple Yup’ik language speakers in Alaska confirmed they had been directly contacted by a Prisma representative, who identified the company as "a language services contractor for the Federal Emergency Management Agency." Julia Jimmie, who was among those contacted, expressed her willingness to translate for FEMA directly but voiced additional concerns about collaborating with Prisma, particularly regarding its reliance on AI.

The expanding integration of AI into various facets of daily life, including translation, has sparked a dichotomy of responses within Indigenous communities globally. On one hand, many Native tech and cultural experts express intrigue regarding AI’s potential, especially for the vital work of language preservation and revitalization. For languages facing extinction, AI could offer tools for archiving, learning, and even conversational practice. On the other hand, a profound skepticism persists, rooted in valid concerns that the technology risks distorting invaluable cultural knowledge and could fundamentally threaten the concept of language sovereignty.

A central tenet of this skepticism revolves around data. Morgan Gray, a member of the Chickasaw Nation and a research and policy analyst at Arizona State University’s American Indian Policy Institute, articulated this concern clearly: "Artificial intelligence relies on data to function. One of the bigger risks is that if you’re not careful, your data can be used in a way that might not be consistent with your values as a tribal community." This highlights a critical tension between the data-hungry nature of AI and the deeply held principles of Indigenous data sovereignty – the inherent right of tribal nations to define how their data is collected, governed, and utilized.

Though the U.S. government currently lacks comprehensive formal regulations for AI or its specific applications, the principle of data sovereignty has gained significant traction in international dialogues concerning Indigenous intellectual property and rights. The United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP) explicitly enshrines the need for free, prior, and informed consent for the use of Indigenous cultural knowledge. UNESCO, the United Nations body tasked with safeguarding cultural heritage, has similarly urged AI developers to respect tribal sovereignty when engaging with Indigenous communities and their invaluable data. Gray underscored the necessity for transparency and consent: "A tribal nation needs to have complete information about the way that AI will be used, the type of tribal data that that AI system might use. They need to have time to consider those impacts, and they need to have the right to refuse and say, ‘No, we’re not comfortable with this outside entity using our information, even though you might have a really altruistic motivation behind doing it.’" It remains unclear whether Prisma, in its pursuit of Alaska Native language translators, has formally engaged with tribal leadership in the Yukon-Kuskokwim Delta; the Association of Village Council Presidents, a consortium representing 56 federally recognized tribes in the region, did not respond to requests for comment on this matter.

Prisma’s website indicates that clients retain the option to utilize only human translators, and the company claims its AI usage is governed by an "AI Responsible Usage Policy." However, the specifics of this policy are not publicly accessible online, and the company did not respond to inquiries seeking clarification, leaving many questions unanswered regarding the safeguards in place for sensitive cultural and linguistic data.

In the three years since the widely criticized 2022 translation fiasco, FEMA has indeed undertaken efforts to improve its engagement with Alaska Native communities. The rigorous reporting by KYUK on the initial scandal not only triggered a civil rights investigation into the agency’s practices but also led the original California contractor, Accent on Languages, to reimburse FEMA for the demonstrably faulty translations. A FEMA spokesperson confirmed that the agency now exclusively employs "Alaska-based vendors" for Alaska Native language services, prioritizing those situated within disaster-impacted areas to ensure local expertise and responsiveness. Furthermore, a secondary quality-control review is now mandated for all translations. The agency stated that "Tribal partners are continuously consulted to determine language services needs and how FEMA can meet those needs in the most effective and accessible manner."

Despite these commendable adjustments, FEMA’s policies concerning AI remain notably less defined. The agency’s email response did not directly address specific questions about whether FEMA has established guidelines to regulate AI use or to explicitly protect Indigenous data sovereignty. Instead, it offered a broader assurance that FEMA "works closely with tribal governments and partners to make sure our services and outreach are responsive to their needs," a statement that falls short of detailing concrete AI governance frameworks.

Government records indicate that Prisma has contracted with FEMA in more than a dozen states across the U.S. The company’s website even highlights a case study where its "LexAI" technology was instrumental in helping a federal agency disseminate disaster relief information in numerous languages, including "rare Pacific Island dialects," following a wildfire event. While Prisma has a history of working with various federal agencies, it appears this marks its first recorded federal government contract specifically within Alaska, raising fresh concerns about its approach to the region’s unique linguistic landscape.

Among Yup’ik language translators in the Y-K Delta contacted by Prisma, a practical and profound concern emerged: the fundamental accuracy of AI in translating their intricate language. "Yup’ik is a complex language," Julia Jimmie explained, emphasizing its agglutinative structure where suffixes are added to root words to convey extensive meaning, forming words that can function as entire sentences in English. "I think that AI would have problems translating Yup’ik. You have to know what you’re talking about in order to put the word together."

This challenge is not unique to Yup’ik. Most AI models, particularly those for machine translation, rely on vast datasets of existing translated text to learn patterns and produce accurate output. Such extensive, high-quality data is rarely available for Indigenous languages, which often have smaller speaker populations and limited digital presence. Consequently, AI has a documented poor record when attempting to translate these languages, frequently generating inaccurate sentences, nonsensical phrases, or even completely fabricated words, undermining the very purpose of translation.

Sally Samson, a Yup’ik speaker and professor of Yup’ik language and culture at the University of Alaska Fairbanks, voiced profound skepticism about AI’s ability to master Yugtun syntax, which differs dramatically from English. Her apprehension extended beyond mere factual misinformation; she worried about the technology’s inability to convey the nuanced Yup’ik worldview and cultural values embedded within the language. "Our language explains our culture, and our culture defines our language," Samson asserted. "The way we communicate with our elders and our co-workers and our friends is completely different because of the values that we hold, and that respect is very important." The loss of these subtle cultural expressions in translation can lead to a profound misunderstanding, impacting how aid is sought, received, and utilized by disaster-affected communities.

Paradoxically, while external deployment of AI raises concerns, Indigenous software developers are actively engaged in leveraging AI to address some of its shortcomings around Native languages, specifically for revitalization efforts. This distinction is critical: these initiatives are driven and controlled by Indigenous communities themselves. For instance, an Anishinaabe roboticist has developed a robot designed to help children learn Anishinaabemowin, while a Choctaw computer scientist created a chatbot capable of conversing in Choctaw. In these cases, Indigenous people are not just the beneficiaries but the architects, making crucial decisions about the technology’s development, application, and ethical boundaries.

However, the introduction of AI by external private companies, particularly those contracting with federal agencies, introduces a distinct layer of complexity and potential for exploitation. Crystal Hill-Pennington, who teaches Native law and business at the University of Alaska Fairbanks and provides legal consultation to Alaska tribes, expressed deep concern about the potential for exploitation if AI models are trained on the work of Indigenous translators, with that data then being used for future commercial benefit by non-Native companies. "If we have communities that have a historical socioeconomic disadvantage, and then companies can come in, gather a little bit of information, and then try to capitalize on that knowledge without continuing to engage the originating community that holds that heritage, that’s problematic," she warned.

Native communities possess centuries of experience with outsiders extracting and exploiting their cultural knowledge. A recent, stark precedent for this kind of controversy occurred in 2022, when the Standing Rock Sioux Tribal Council took the extraordinary step of voting to banish a nonprofit organization that had initially promised to assist in preserving their language. After Lakota elders had spent years sharing their invaluable cultural knowledge with the non-Native group, the organization controversially copyrighted the material and subsequently attempted to sell it back to tribal members in the form of textbooks. This incident underscores the profound distrust that can arise when intellectual property rights over Indigenous knowledge are not respected.

Hill-Pennington emphasized that the advent of AI, particularly its capacity for data scraping and model training, adds another intricate layer to contemporary discussions surrounding intellectual property and cultural heritage. "The question is, who ends up owning the knowledge that they’re scraping?" she pondered, highlighting the need for clarity and robust legal frameworks.

Standards regarding AI and Indigenous cultural knowledge are rapidly evolving, mirroring the swift pace of technological advancement. Hill-Pennington acknowledged that some companies utilizing AI may still be unfamiliar with the fundamental expectation of informed consent and the critical concept of data sovereignty. Nevertheless, she stressed that these standards are becoming increasingly pertinent and unavoidable. "Particularly if they’re going to be doing work with, let’s say, a federal agency that does fall under executive orders around authentic consultation with Indigenous peoples in the United States, then this is not something that should be overlooked," she concluded, underscoring the urgent need for federal agencies to establish clear, ethical guidelines for AI use that prioritize the sovereignty, rights, and cultural integrity of Indigenous communities.