In 2022, a translation failure left thousands of Alaska Native residents in Western Alaska struggling to access vital federal disaster aid. Following historic storms, the Federal Emergency Management Agency (FEMA) hired a California-based contractor, Accent on Languages, to translate applications for financial assistance. The region, which includes the vast Yukon-Kuskokwim Delta, is home to a constellation of small Alaska Native communities where nearly half of the approximately 10,000 residents speak Yugtun, the Central Yup’ik dialect, as their first language, often before learning English. Further north, roughly 3,000 people speak Iñupiaq. But when journalists at public radio station KYUK reviewed the translated materials, they found them unintelligible. Julia Jimmie, a Yup’ik speaker and translator for KYUK, said the translations were "Yup’ik words all right, but they were all jumbled together, and they didn’t make sense." To speakers, the result suggested that outside institutions no longer understood or respected their language. The failure prompted a civil rights investigation into FEMA’s practices and led the contractor to reimburse the agency for the faulty work, a reminder of how much language barriers matter to vulnerable communities in a crisis.

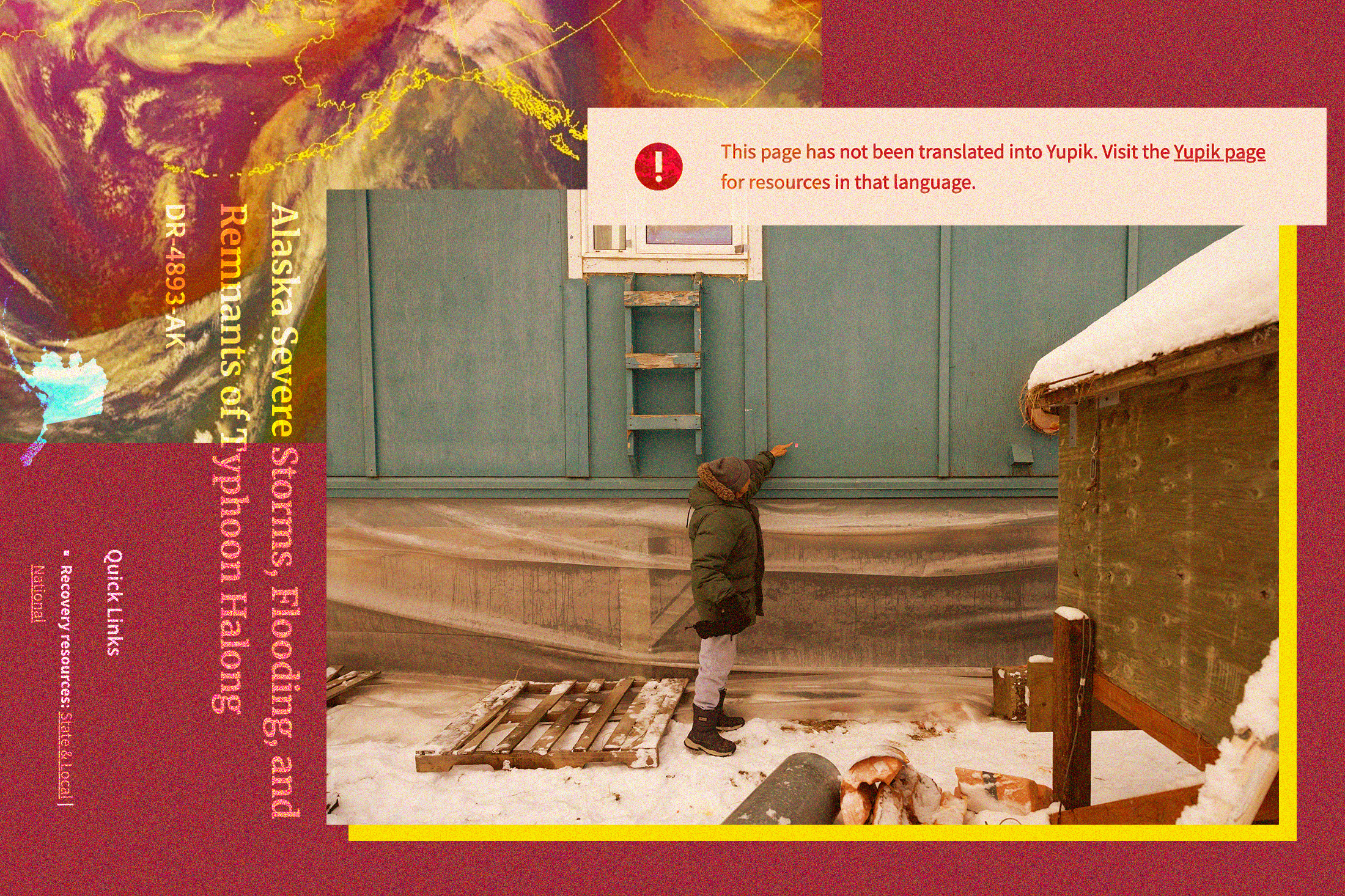

Just three years later, concerns about inadequate translation have returned as the region deals with the aftermath of another catastrophic storm. In mid-October, the remnants of Typhoon Halong struck Western Alaska, displacing more than 1,500 residents and killing at least one person in the village of Kwigillingok. The disaster has revived urgent questions about language access in emergency response, particularly about the growing role of artificial intelligence (AI) in serving Indigenous communities. As residents navigate the complex process of applying for federal assistance, a new contractor, Minneapolis-based Prisma International, has become involved, prompting fresh concern.

Prisma International, which government records show has secured over 30 contracts with FEMA in recent years, posted an advertisement on October 21, the day before the Trump administration approved a disaster declaration for Typhoon Halong, seeking "experienced, professional Translators and Interpreters" for Yup’ik, Iñupiaq, and other Alaska Native languages. The company’s public profile highlights its strategy of combining "AI and human expertise to accelerate translation, simplify language access, and enhance communication." The job listing for Alaska Native language translators also required the use of a "Computer-Assisted Translation (CAT) tool," indicating that software, and possibly AI-driven technology, would play a role in the work.

While FEMA declined to confirm whether it had officially contracted Prisma for the Alaska response, the job posting’s preference for applicants with experience translating for "emergency management agencies, e.g. FEMA," alongside requirements for knowledge of the recent storm and connections to local Indigenous communities, strongly suggested a forthcoming engagement. Indeed, several Yup’ik language speakers in Alaska confirmed receiving direct outreach from a company representative, who explicitly identified Prisma as "a language services contractor for the Federal Emergency Management Agency." Julia Jimmie, who had previously exposed the 2022 translation failures, was among those contacted, expressing willingness to assist FEMA but raising pertinent questions regarding the specific methodologies employed by Prisma.

The increasing integration of AI into various facets of daily life, including language services, has elicited a mixed response within Indigenous communities globally. On one hand, many Native tech and cultural experts acknowledge AI’s intriguing potential, especially in the critical domain of language preservation and revitalization. For languages facing endangerment, AI could offer innovative tools for documentation, learning, and cultural transmission. However, this optimism is tempered by deep-seated skepticism and significant apprehension that the technology, if improperly applied, risks distorting invaluable cultural knowledge and undermining the fundamental principles of language sovereignty.

Morgan Gray, a member of the Chickasaw Nation and a research and policy analyst at Arizona State University’s American Indian Policy Institute, articulates a core concern: "Artificial intelligence relies on data to function. One of the bigger risks is that if you’re not careful, your data can be used in a way that might not be consistent with your values as a tribal community." Her statement points to the concept of "data sovereignty," the inherent right of a tribal nation to define and control how its collective data is gathered, managed, and used. The principle is not merely theoretical. It is increasingly central to international discussion of Indigenous intellectual property rights and is reflected in instruments such as the United Nations Declaration on the Rights of Indigenous Peoples, which calls for free, prior, and informed consent for the use of Indigenous cultural knowledge. UNESCO, the United Nations body tasked with safeguarding cultural heritage, has also urged AI developers to respect tribal sovereignty when working with Indigenous communities’ data. Gray stresses that tribal nations require "complete information about the way that AI will be used, the type of tribal data that that AI system might use," along with sufficient time for deliberation and, crucially, "the right to refuse."

The opacity surrounding Prisma’s engagement with local Indigenous leadership further compounds these concerns. As of late October, it remained unclear whether the company had initiated contact with tribal leadership in the Yukon-Kuskokwim Delta. The Association of Village Council Presidents, a consortium representing 56 federally recognized tribes in the region, did not respond to inquiries for comment, leaving a vacuum of information regarding tribal consultation. Prisma’s website indicates that clients can opt for exclusively human translators and asserts that its AI usage is governed by an "AI Responsible Usage Policy." However, the specific details of this policy are not publicly accessible, and the company did not respond to requests for clarification.

In the wake of the 2022 translation debacle, FEMA has reportedly undertaken efforts to improve its engagement with Alaska Native communities. The KYUK reporting that exposed the initial scandal prompted a civil rights investigation, leading to significant changes. A FEMA spokesperson stated that the agency now exclusively employs "Alaska-based vendors" for Alaska Native languages, prioritizing those within disaster-impacted areas, and has implemented a mandatory secondary quality-control review for all translations. The agency also affirmed that "Tribal partners are continuously consulted to determine language services needs and how FEMA can meet those needs in the most effective and accessible manner." Despite these commendable steps, FEMA’s policies regarding AI remain ambiguous. The agency’s response did not directly address questions about specific regulations governing AI use or measures to protect Indigenous data sovereignty, offering only a general assurance that FEMA "works closely with tribal governments and partners to make sure our services and outreach are responsive to their needs."

Prisma’s broader operational footprint extends beyond Alaska, with government records indicating FEMA contracts in more than a dozen states. The company’s website features a case study illustrating its "LexAI" technology’s application in assisting a federal agency to disseminate disaster relief information in over 16 languages, including "rare Pacific Island dialects," following a wildfire. While Prisma has collaborated with several other federal agencies, its current engagement would mark its first known federal contract specifically within Alaska.

For Yup’ik language speakers in the Y-K Delta, the practical reliability of AI translation is an immediate concern. Julia Jimmie pointed to the intricacy of Yup’ik: "Yup’ik is a complex language. I think that AI would have problems translating Yup’ik. You have to know what you’re talking about in order to put the word together." Her skepticism has a technical basis. Current AI translation models depend on large data sets to achieve accuracy, and such data is often scarce for Indigenous languages, which makes them "low-resource languages" for AI development. As a result, AI has a documented poor track record with these languages, frequently producing inaccurate sentences, culturally inappropriate phrasing, or even invented words, any of which could have dire consequences in disaster relief communications.

Sally Samson, a Yup’ik professor of language and culture at the University of Alaska Fairbanks, voiced deep doubts about AI’s ability to handle Yugtun syntax, which differs significantly from English grammar. Her concerns go beyond factual errors to the risk of misrepresenting the Yup’ik worldview. "Our language explains our culture, and our culture defines our language," Samson said. "The way we communicate with our elders and our co-workers and our friends is completely different because of the values that we hold, and that respect is very important." Emergency messaging that strips away these cultural nuances could harm the community’s well-being as well as its access to aid.

At the same time, Indigenous software developers are working to address AI’s limitations with Native languages, often with the express goal of language revitalization. An Anishinaabe roboticist, for instance, has designed a robot to help children learn Anishinaabemowin, while a Choctaw computer scientist has created a chatbot that can converse in Choctaw. The crucial distinction is that in these projects Indigenous people build the AI models and decide how they may ethically be used, which helps protect cultural integrity and keep the benefits within the community.

However, Crystal Hill-Pennington, who teaches Native law and business at the University of Alaska Fairbanks and provides legal consultation to Alaska tribes, cautions against the potential for exploitation when non-Native companies introduce AI. She worries about the risk of AI models being trained on the invaluable work of Indigenous translators, with the resulting intellectual property then potentially capitalized upon by external entities without sustained engagement or equitable benefit to the originating communities. "If we have communities that have a historical socioeconomic disadvantage, and then companies can come in, gather a little bit of information, and then try to capitalize on that knowledge without continuing to engage the originating community that holds that heritage, that’s problematic," Hill-Pennington stated. This concern echoes centuries of Indigenous communities experiencing the extraction and exploitation of their cultural knowledge. A recent precedent highlights this risk: in 2022, the Standing Rock Sioux Tribal Council famously banished a nonprofit organization that, after years of Lakota elders sharing cultural knowledge, copyrighted the material and attempted to sell it back to tribal members in textbook format.

Hill-Pennington emphasizes that the use of AI by private corporations adds another layer of complexity to current debates over intellectual property and cultural heritage. "The question is, who ends up owning the knowledge that they’re scraping?" she asked. Standards governing AI’s interaction with Indigenous cultural knowledge are evolving quickly, keeping pace with the technology itself. While some companies using AI may still be unfamiliar with the expectation of informed consent and the concept of data sovereignty, Hill-Pennington says these standards are becoming increasingly relevant, especially for companies working with federal agencies, which are bound by executive orders requiring authentic consultation with Indigenous peoples in the United States. In that context, overlooking these considerations is no longer acceptable. The situation in Western Alaska is a stark reminder of the need for transparent, ethical, and culturally informed approaches when technology is deployed in vulnerable communities.